The site is finished and launched, but traffic is at zero and it doesn't show up in Google search results. Most likely, the problem is easy to solve. Let's look at four reasons why a site is missing from the search results, along with tips on how to speed up its indexing.

The site is not indexed

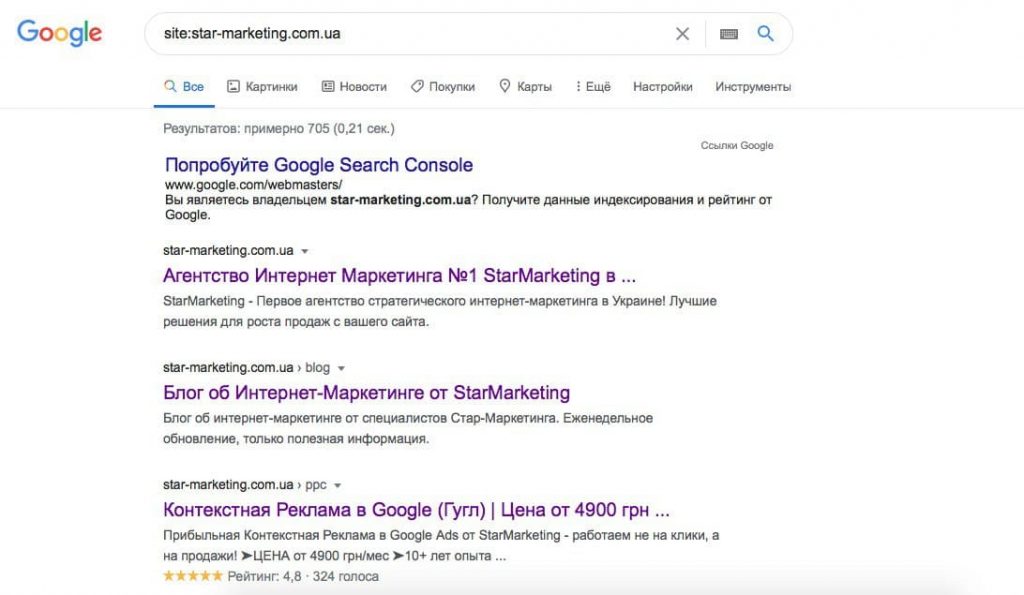

Search robots discover a new website by following links and add it to the search index. To find out whether your site has been indexed, type "site:" followed by your domain, with no space after the colon, into the Google search box. For example: site:star-marketing.com.ua. The results will list the indexed pages of the site.

The site will get into the index faster if you use Google Search Console. Register, verify ownership of the site, and request indexing for the pages you need. In the dashboard, you can track error notifications, recommendations, indexing status, and click statistics.

To manually submit a page for indexing, enter the page address in the URL Inspection field.

After a while, the submitted page should appear in the Google search results.

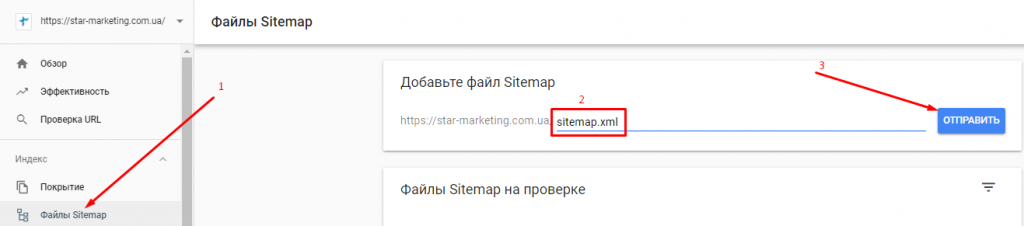

Search robots crawl a site faster when it has a sitemap. In the sitemap, list the URL of every page you want indexed. Do not include links generated by user actions (site search results, filters, and so on); search robots should not index them. The sitemap is usually located at /sitemap.xml. For example: https://star-marketing.com.ua/sitemap.xml. Submit it to Google Search Console:

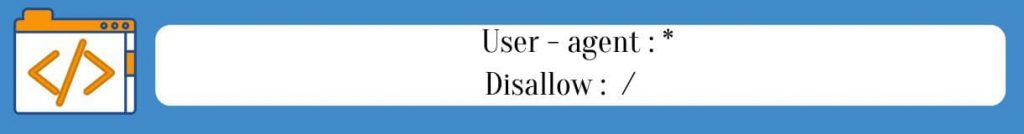
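As an illustration of the sitemap rules above, here is a minimal Python sketch that builds a sitemap.xml from a list of page addresses. The function name `build_sitemap` and the example.com addresses are hypothetical, and the rule "skip any URL that has a query string" is only an assumed stand-in for "links that appear after user actions"; your site may need a different filter.

```python
from urllib.parse import urlsplit
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return sitemap XML that lists only URLs without a query string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        # Assumption: a query string marks a search or filter result page.
        if urlsplit(url).query:
            continue
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    pages = [
        "https://example.com/",
        "https://example.com/services/",
        "https://example.com/search?q=seo",  # left out of the sitemap
    ]
    print(build_sitemap(pages))
```

The resulting text can be saved as sitemap.xml in the site root and then submitted in Google Search Console.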

Indexing is blocked in the robots.txt file

Developers place a robots.txt file in the site's root directory to control which pages robots may visit. Pages are closed to crawlers if the file contains these lines:

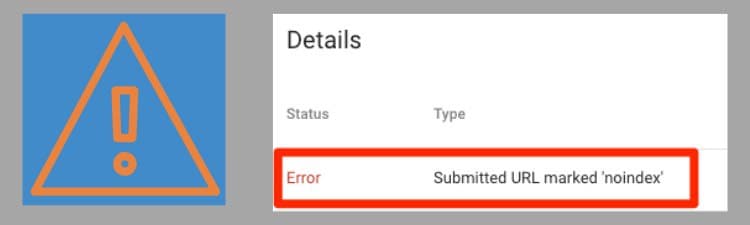
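You can also test a robots.txt file locally before waiting for a report. The sketch below uses Python's standard `urllib.robotparser` module. The helper name `is_blocked`, the example.com address, and the two sample rule sets are assumptions for illustration; `BLOCK_ALL` is a typical site-wide ban and is not necessarily identical to the lines in the screenshot.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text forbids user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Assumed example: a rule set that closes the whole site to every robot.
BLOCK_ALL = """User-agent: *
Disallow: /
"""

# Assumed example: an empty Disallow value allows everything.
ALLOW_ALL = """User-agent: *
Disallow:
"""

if __name__ == "__main__":
    page = "https://example.com/services/"
    print(is_blocked(BLOCK_ALL, page))
    print(is_blocked(ALLOW_ALL, page))
```

To check a live site, paste the contents of its robots.txt into the first argument. Python's parser does not reproduce every detail of how Google interprets the rules, so treat the result as a quick sanity check only.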

When work on the site is completed, the webmaster may forget to remove the ban, and the search engine will not see the site. To check whether the block is active, submit your sitemap to Google Search Console. If Google tried to crawl the pages and found them blocked, the "URL Inspection - Coverage" report will show the error "Submitted URL blocked by robots.txt".

Found this error on your site? We recommend contacting professionals, who will remove the block correctly. Once the blocking rule has been removed from robots.txt, request indexing from Google again.

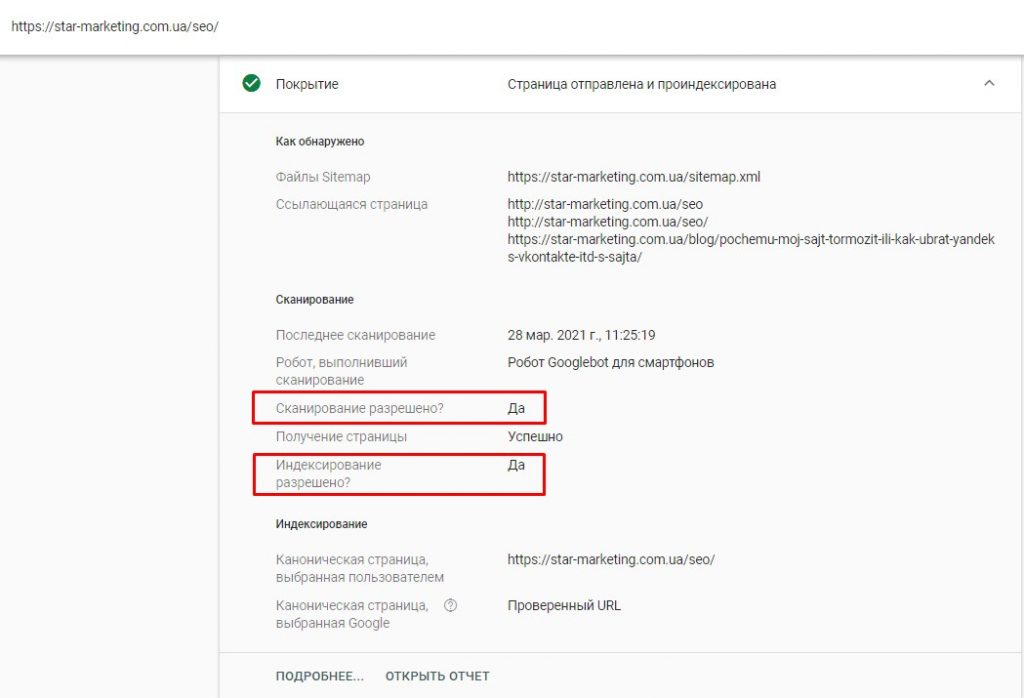

When the site is indexed, the "URL Inspection - Coverage" report will show a corresponding checkmark and indicate that crawling is allowed:

Page indexing is blocked in WordPress

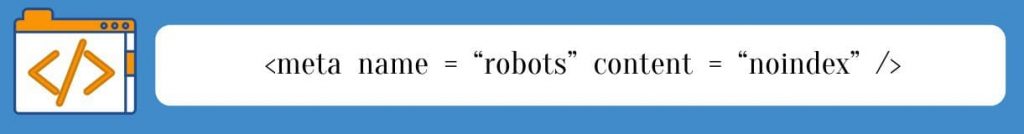

This kind of block makes pages invisible to search robots, which is useful while the site is being built or tested. Such pages won't show up in search results even if you submit your sitemap to Google.

The code looks like this:

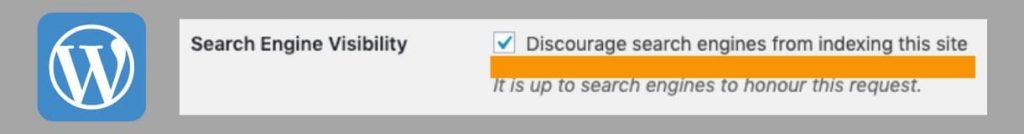
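To check a page for this tag without opening its source by hand, a small script is enough. The sketch below uses Python's standard `html.parser` module. The names `RobotsMetaFinder` and `has_noindex` are hypothetical, and the sample markup is an assumed example of a robots meta tag, which may differ from the exact code in the screenshot.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            content = attributes.get("content", "")
            self.directives += [part.strip().lower() for part in content.split(",")]


def has_noindex(html):
    """Return True if the HTML contains a robots meta tag with 'noindex'."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


if __name__ == "__main__":
    # Assumed sample markup, not copied from a real site.
    blocked = "<head><meta name='robots' content='noindex, nofollow' /></head>"
    open_page = '<head><meta name="robots" content="index, follow"></head>'
    print(has_noindex(blocked))
    print(has_noindex(open_page))
```

The script looks only at meta tags in the HTML. A "noindex" sent in an HTTP response header would not be detected this way.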

WordPress automatically adds the "noindex" meta tag to the page HTML when the option in the "Search Engine Visibility" section is enabled:

This checkbox is ticked during development so that users do not come across the unfinished site. When the work is completed, people often forget to untick it. Check the Google Search Console report to see whether it shows a notice that indexing of the page is blocked.

Sanctions from Google

This is the least likely reason why a site is missing from the search results. If the site meets Google's requirements and keeps up with algorithm changes, no penalties are applied. You can learn more about search engine sanctions in our article. Leave the correction of such problems to experienced webmasters!

Improving the site for indexing

When developing a website, take Google's requirements and the way its algorithms work into account. SEO brings the site in line with them. This means tuning, as a whole, the parameters that affect indexing and ranking.

Here are several factors that affect how quickly a site is indexed:

- Web page technology. Content in plain HTML is indexed more reliably than content rendered with JavaScript or loaded via AJAX.

- External links. If a reputable source links to your site, Google will find it faster.

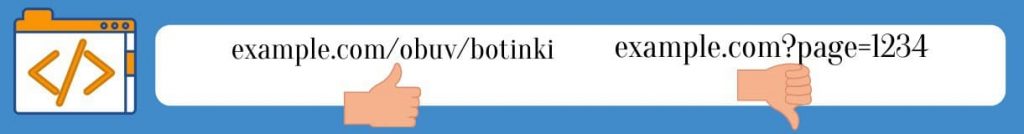

- URL structure. A page name in the URL path (a human-readable URL) is easier for algorithms to process than URL parameters.

- SEO optimization. This is a set of on-site measures aimed at reaching the top of the search results. Pages that match search queries rank well. Key phrases in the content, description, and URL show that the site contains information users are looking for. If they are missing, the site is unlikely to appear in the results for those phrases.

- Duplicate pages. There are different ways to detect pages with identical content; one of them is the Ahrefs Site Audit online service. For the detected duplicates, specify the main (canonical) page using the rel="canonical" attribute, or set up a 301 redirect to the desired page.

- Non-unique content. Google does not reward plagiarism. Publish texts with a uniqueness of at least 95%. Add original images.

- Slow loading speed. This happens when the site uses "heavy" images and videos or complex animation, or when the platform and hosting have limited resources. Compress images, adjust caching settings, and disable unnecessary plugins to speed up the site. If you need deeper optimization of loading speed, contact professionals.
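For the duplicate-page item in the list above, a rough first pass can be done without any external service. The Python sketch below groups pages whose text is identical after lowercasing and collapsing whitespace. The function name `find_duplicates` and the sample paths are hypothetical, and exact matching is a simplifying assumption: near-duplicates with small differences will not be caught, and this is not how Ahrefs or Google detect duplicates.

```python
import hashlib
from collections import defaultdict


def find_duplicates(pages):
    """Group URLs whose text is identical after normalization.

    pages maps a URL (or path) to the visible text of that page.
    Returns a list of groups, each a sorted list of duplicate URLs.
    """
    groups = defaultdict(list)
    for url, text in pages.items():
        # Normalize: lowercase and collapse runs of whitespace.
        normalized = " ".join(text.lower().split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        groups[digest].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


if __name__ == "__main__":
    # Hypothetical sample data.
    sample = {
        "/catalog/shoes": "Buy shoes   online",
        "/catalog?category=shoes": "buy shoes online",
        "/contacts": "Our address and phone",
    }
    for group in find_duplicates(sample):
        print("Duplicate group:", group)
```

For each reported group, choose one address as the canonical page and point the others to it with rel="canonical" or a 301 redirect.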

Often a site is not indexed because no sitemap has been submitted to Google or because indexing is blocked in robots.txt or in the page code. These problems are easy to fix. If search engine penalties have been applied, contact specialists so that you do not make the situation worse with mistaken actions. Working on the quality of the site speeds up indexing and helps it reach the top of the search results faster.